Company Bought An AI Machine To Answer Internal Questions, And It Malfunctions So Badly It’s Funny

Lately, we’ve been hearing how AI is going to take over the world. Scientists are trying to create various models that could be implemented in many areas of life, and their efforts aren’t fruitless: artificial intelligence is already used in quite a lot of places. For instance, in the workplace, AI models are “hired” to do certain jobs, like answering questions for employees. Unfortunately, they don’t always work the way they’re intended to, just like in today’s story.

More info: Reddit

Some say that one day, artificial intelligence might take over the world. But some of the current AI models prove that day is not today

Image credits: Hatice Baran (not the actual photo)

A company bought an expensive AI model to help employees with any questions they might have

Image credits: Ankit Rainloure (not the actual photo)

Image credits: Matheus Bertelli (not the actual photo)

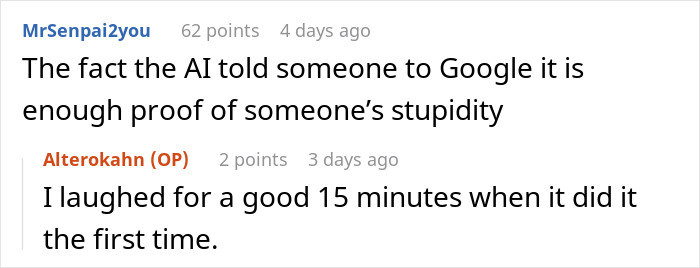

Image credits: Alterokahn

But the AI model ended up malfunctioning so badly that instead of employees asking it questions, they asked questions about it, as the model started giving made-up information

To justify layoffs, the OP’s company purchased an expensive ChatGPT-based AI chat model with no name to answer internal questions the employees had.

A few well-known examples of AI models meant for answering questions are Google Assistant, Amazon Alexa, and Apple Siri. Of course, it should be noted that this list isn’t exhaustive by any means, and with the recent AI craze, new models are coming into use nearly every day.

Coming back to the story, the information given to the AI was very specific, so the model started telling people it wasn’t sure about the results. Shortly after, it began telling employees to simply “Google it.” And that’s not even the end. According to the post’s author, the model now simply makes stuff up and ignores the original questions.

The OP talked with the engineers in charge of the model, and they explained that the model bases each answer on a list of the 5-10 top-rated documents. If none of these documents contains a straightforward answer, the AI model starts “hallucinating” and stitching mismatched pieces together.
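The engineers’ description resembles a common pattern: rank documents against the question, keep the top few, and answer from them. The post does not describe the actual system, so the following is only a toy sketch under loud assumptions: the word-overlap scoring, the function names (`top_documents`, `answer_or_abstain`), and the `min_score` cutoff are all hypothetical. It illustrates one possible guard, declining to answer when even the best-ranked document matches poorly, rather than anything the company’s model is known to do.

```python
import re
from collections import Counter
from math import sqrt


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(tokens_a, tokens_b):
    """Cosine similarity between two bags of words (0.0 if either is empty)."""
    counts_a, counts_b = Counter(tokens_a), Counter(tokens_b)
    dot = sum(counts_a[t] * counts_b[t] for t in counts_a)
    norm_a = sqrt(sum(v * v for v in counts_a.values()))
    norm_b = sqrt(sum(v * v for v in counts_b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_documents(question, documents, k=5):
    """Return the k best-matching (score, document) pairs, best first."""
    q_tokens = tokenize(question)
    scored = [(cosine(q_tokens, tokenize(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


def answer_or_abstain(question, documents, k=5, min_score=0.3):
    """Return the best document, or None when nothing matches well enough.

    min_score is an arbitrary, hypothetical cutoff chosen for this sketch.
    """
    ranked = top_documents(question, documents, k)
    if not ranked or ranked[0][0] < min_score:
        return None  # abstain instead of piecing together weak matches
    return ranked[0][1]


docs = [
    "Expense reports are due on the last Friday of each month.",
    "The VPN client must be updated before connecting remotely.",
]
covered = answer_or_abstain("When are expense reports due?", docs)
uncovered = answer_or_abstain("What is the parental leave policy?", docs)
```

In this sketch, a question the documents cover returns the matching document, while a question they do not cover returns `None`, which a caller could turn into an honest “I don’t know” instead of a made-up answer.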

Part of it could be solved by providing gap-filling documentation, but much of the trouble stems from the way the questions are asked. And since the company has employees all around the world, it’s possible some employees aren’t forming questions in English. So, the company is left stuck with a self-hating AI model.

Some netizens found the situation funny and made jokes like, “This AI entered its teenage years,” while others said they felt kind of jealous of it, as it can simply tell people to Google the information they need. A few were simply curious about what made the model malfunction this way.

Image credits: Lukas (not the actual photo)

But AI models can be used for way more than just answering questions. In fact, AI is so ingrained in our lives that we don’t even notice it. For example, nearly all social media platforms use AI in some capacity, whether to recommend accounts you might like or to arrange your feed according to what you’re interested in.

Basically, it’s the same thing with Netflix — it uses AI to improve its content recommendations. With online stores, AI-based algorithms are used to predict, for example, what customers might want to buy. Again, the AI field is already so broad, and it’s growing every day, so it’s hard to keep track of every single thing in our lives that AI is in charge of.

Yet experts warn that AI shouldn’t be trusted blindly. After all, it’s still a machine: it can be unpredictable, it can act immorally, and the algorithms it’s based on can be unclear.

In the future, it’s possible that artificial intelligence will evolve enough to overcome these flaws, but then other challenges will arise, such as the models potentially taking jobs away from people. These models already do this, or at least attempt to. As we can see in the Reddit story, the AI was bought to justify layoffs, and such cases might become more common in the future.

AI can be either useful or unhelpful in many areas, depending on what it’s used for and by whom. Right now, at least, it isn’t capable of avoiding burnout, so we can probably feel safe in our jobs for a little while. And what awaits in the future, even AI itself can’t predict right now.

Some internet folks thought this whole situation was hilarious, while others were interested in why such a smart technology started acting so stupidly


People don't understand that AI isn't really intelligent. It's just an algorithm propped on a database. It doesn't understand the data it's given. It doesn't know what any of the data is, it just follows its protocols and if those reach a dead end, it randomises. On the surface it appears smart, but as soon as you start working with it, you quickly reach its limits, and that will never change. Even if you could upload all the data from a human brain into its database, that wouldn't change a thing. Even a golden retriever actually knows more and is smarter than any given AI. They just can't make it 'alive'. It can't understand anything. And you can't program understanding. It's the difference between rare data and true, organic intelligence. No matter how much you know, that's not truly a measure of your intelligence. There are hard limits on what a computer can do, and we reached them years ago. Every new 'breakthrough' of the last years was unreliable and turned out as mere fluff.

“AI” language models routinely make stuff up because they’re just advanced predictive text engines and don’t actually *know* anything. If you try to use it to retrieve information you’re not capable of fact checking yourself, you’re always at risk of receiving nonsense.

So many people are desperate for work, and yet here we are. Using these stupid, worthless pieces of metal! 🤦🏾‍♀️

I love the comment from jfgallay asking if the AI is named Marvin, and then the OP's comment about it hating its life. Cracked me up. If nobody else had made a comment about its name being Marvin, I was going to. Just in case nobody knows the reference, this is a reference to a series of books called The Hitchhiker's Guide to the Galaxy. In it there is a robot named Marvin, and it is paranoid and hates its life.

"Based on the article, do you believe AI will significantly improve in the future?" Not being used like that, it won't. As it is with all things technical: Garbage In = Garbage Out. They are stupid people making it do stupid things. I'm sorry, excuse me; not stupid, but unimaginative, out-of-touch executives using AI to replace actual people trapped in a dead-end, thankless job. No, it won't save the world or destroy art. AI is just another tool that can be used for better things than crappy customer service. If only we had imaginative people that would use it to do something different, something important, something better.

It's called bleeding edge tech for a reason. You haemorrhage cash mostly, but it also stabs and stings you in many other ways.

Are you sure it doesn't have a name? Sounds very much like it might be called Marvin.

That joke was already made in the comments in the article.
